AI-generated sexual violence is spreading fast — and major tech companies are helping power it. We’re mapping the ecosystem behind it.

Our bodies are OURS, online or off. Help us track and expose the deepfake ecosystem.

Here’s what we know

Every single day, women and girls bear the brunt of this abuse, while companies like Google, Apple, and xAI fuel the violence and profit off our pain, all in the name of our “AI future.”

We reached a tipping point in January 2026 when an estimated 3 million individuals, including 23,000 children, were sexually deepfaked through the use of xAI’s Grok chatbot. And that was just one app among many.

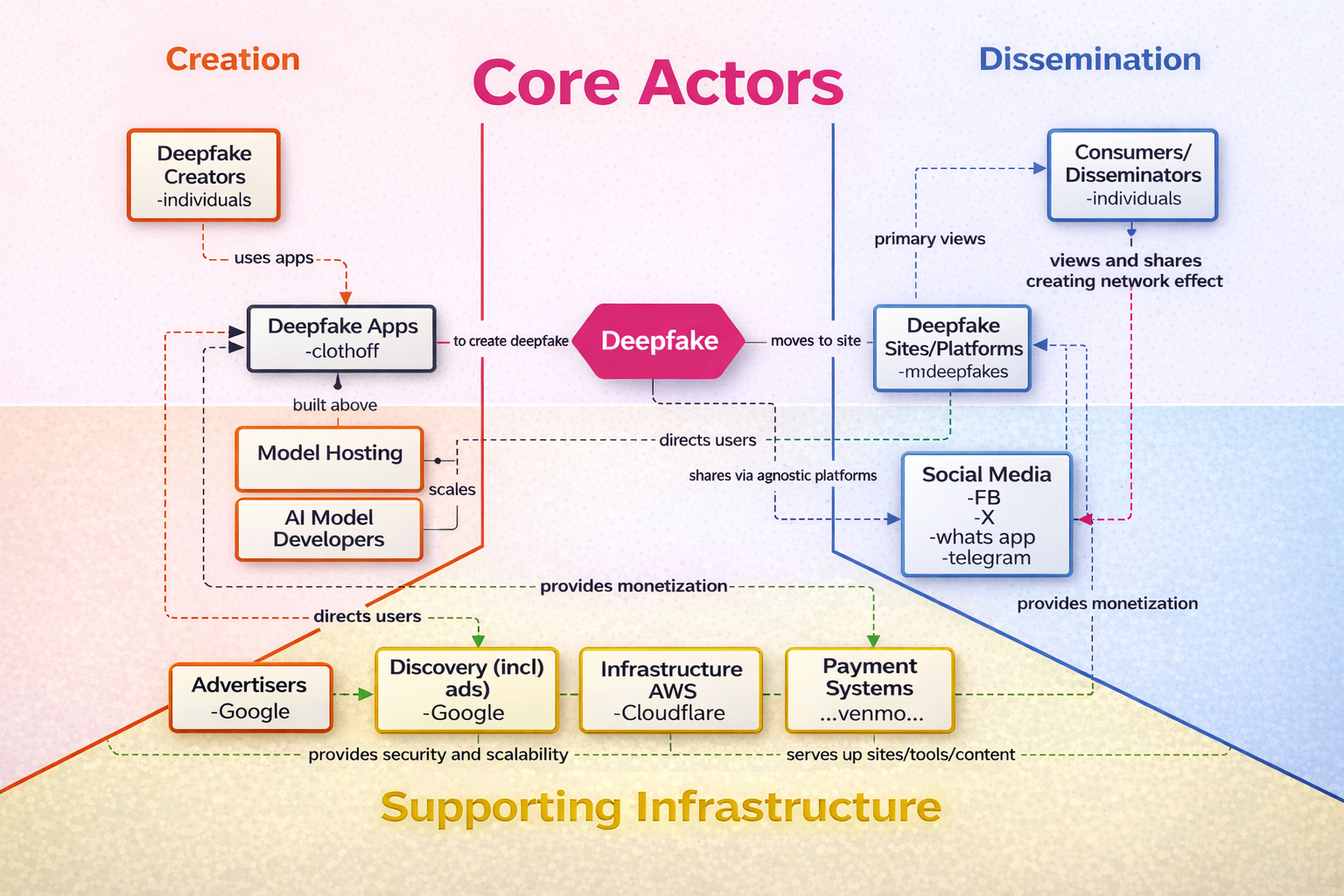

The ecosystem of deepfake abuse is layered and complicated, but we’re set on simplifying it. There are two main categories of enablers:

Core actors

The supporting infrastructure

We can think of the core actors as the obvious perpetrators, like AI apps set up to “declothe” and owners of websites dedicated to deepfake abuse.

We can think of the supporting infrastructure as the tech giants that enable and platform the core actors, like Google Search and Apple’s App Store.

Who profits from this?

Top targets

It’s easier to identify the “supporting infrastructure” of the deepfake abuse ecosystem than the “core actors” because we live in a tech monopoly. Here are ten top companies supercharging this violent ecosystem:

Google

Google Search drives the majority of traffic to websites dedicated to platforming non-consensual sexual deepfakes. The Google Play Store also continues to platform xAI’s chatbot Grok, social media platform X (where Grok is embedded), and countless other “nudify” apps despite the widespread use of these tools to create and disseminate sexually abusive content, including child sexual abuse material (CSAM).

Apple

Apple’s App Store also continues to platform xAI’s chatbot Grok, social media platform X, and countless other “nudify” apps despite the widespread use of these tools to create and disseminate sexually abusive content, including CSAM.

xAI

xAI is the company that owns social media platform X and Grok, which together allowed users to create and disseminate non-consensual sexual deepfakes of an estimated 3 million people, including 23,000 children, in just a two-week period from the end of 2025 through the start of 2026.

X

Grok is embedded in social media platform X. This embedding meant that X users could both easily create sexually abusive deepfakes AND disseminate them far and wide on the social media platform. Once again, the result was millions of people being victimized. Outside of Grok, X has also long had a problem with leaving up AI-generated sexually abusive content.

GitHub

GitHub is a platform that lets developers store, share, and collaborate on code. The company has tried to crack down on deepfake abuse, but it isn’t going well. Programs used by creators of non-consensual sexual deepfakes continue to evade GitHub’s detection, meaning code for sexually deepfaking real people is basically up for grabs online.

OpenAI

OpenAI (which owns and runs ChatGPT) also owns and runs a video-generation app called Sora 2. We golf clap for OpenAI putting safeguards in place for Sora 2 that prevent creating explicit content and prohibit using someone’s real likeness. But even the company itself says that content slips past those safeguards and the app generates sexual deepfakes about 1.6% of the time. In addition, there are a lot of gross fetish videos on Sora 2, as well as grey-area videos like kids putting themselves in videos with porn stars. All around just really not good.

Meta

It’s safe to say that much of Facebook and Instagram today is either AI slop or an ad. Right in that mix is a mountain of deepfakes, including non-consensual sexual ones, that even Meta’s own Oversight Board says the company is not doing enough to keep up with. Maybe they should have thought of that when Zuckerberg gutted Meta’s content moderation system back in January 2025, days before Trump’s inauguration… Oh, also, Meta AI’s new ‘Vibes’ feature is flooding its platforms with sexual deepfake videos of children. We can’t make this up.

Cloudflare

Cloudflare is essentially a web infrastructure company. Put simply, it hosts and delivers websites, among other things. An audit of 85 AI websites dedicated to “nudifying” (ew) found that Cloudflare and Amazon provide hosting or content delivery services for 62 of them. No doubt that brings in a good chunk of change for both Cloudflare and Amazon.

Amazon

Same as above. Amazon Web Services (AWS) platforms some of the worst offending websites dedicated to abusive deepfake content. This violent ecosystem would not be as big as it is if the massive tech companies that basically own the internet weren’t profiting from platforming these websites and tools and keeping them readily available.

Payment processors

Last year, The Indicator crunched some numbers and estimated that the current economy of “nudifying” or “declothing” apps is worth over $36 million. Where does that money move? Through payment processors, which are actively enabling the monetization of this heinous ecosystem.

Help us expose the deepfake ecosystem.

We’re looking for tips on actors not yet represented on our map — especially those creating, hosting, or profiting from AI-generated sexual abuse.

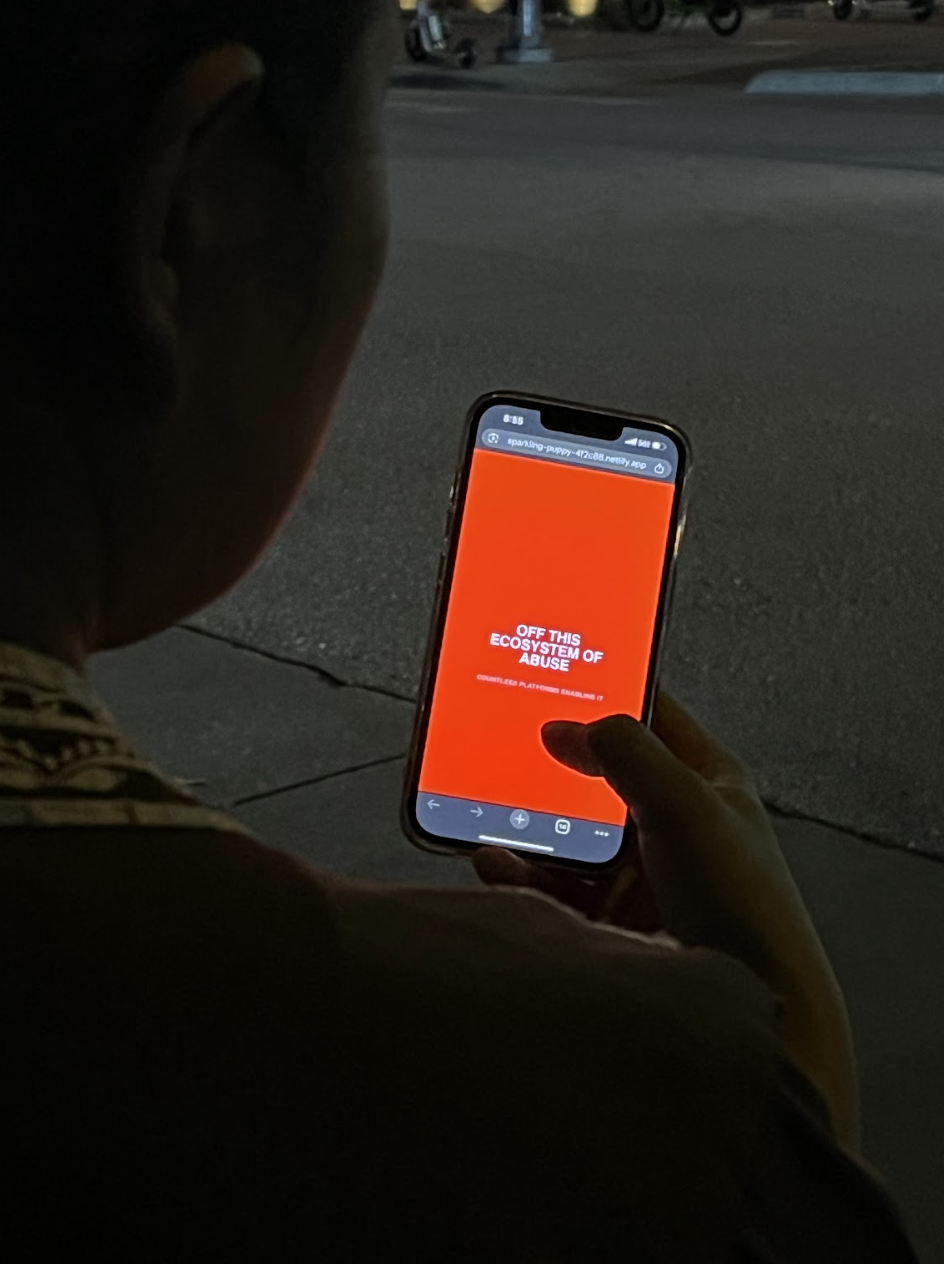

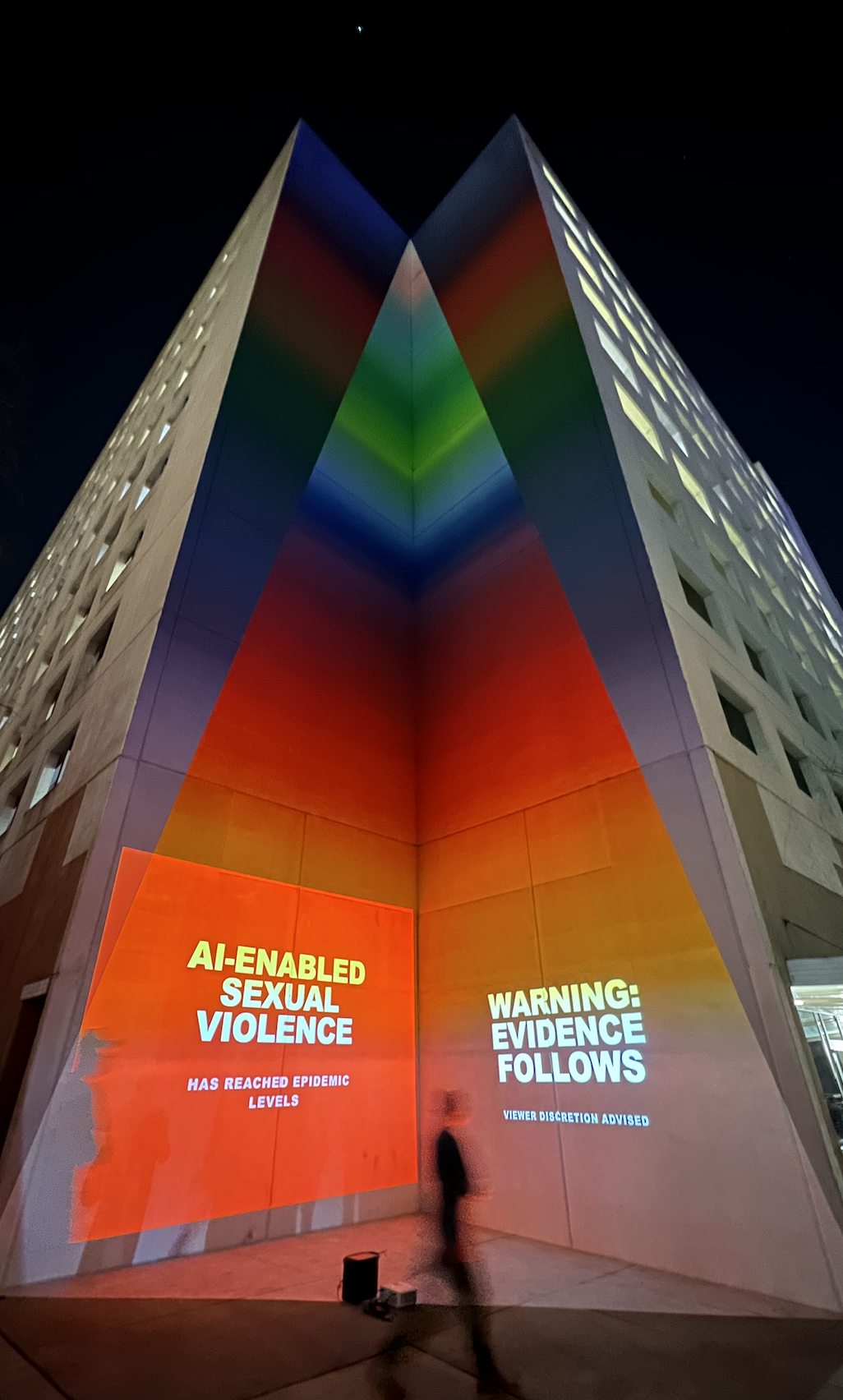

This campaign is a collaborative project between Repro Uncensored and UltraViolet, launching at South by Southwest (SXSW) 2026.

It features a public Wi-Fi intervention that prompts audiences to confront real deepfake prompts, along with citywide projections across Austin exposing the ecosystem that enables AI-generated sexual abuse.

This project is made possible with the support of Essentials Creative, SisterSong, and Chalk Back.